Scenario: Classic RCT

We call a 'Classic Randomized Controlled Trial' (RCT) a scenario in which a treatment is randomly assigned to participants and no pre-experiment data about them, such as a pre-treatment outcome, is available.

Treatment: a new onboarding flow for new users.

We will test the following hypotheses:

- $H_0$: There is no difference in conversion rate between treatment and control groups.

- $H_1$: There is a difference in conversion rate between treatment and control groups.

Data

We will use the data-generating process (DGP) from causalis. You can read more at https://causalis.causalcraft.com/articles/generate_classic_rct_26

| | user_id | conversion | d | platform_ios | country_usa | source_paid | m | m_obs | tau_link | g0 | g1 | cate |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 01fc4 | 0.0 | 0.0 | 1.0 | 0.0 | 1.0 | 0.5 | 0.5 | 0.106483 | 0.310620 | 0.333868 | 0.023249 |
| 1 | 0204c | 0.0 | 1.0 | 0.0 | 0.0 | 1.0 | 0.5 | 0.5 | 0.106483 | 0.198257 | 0.215727 | 0.017471 |
| 2 | 002cf | 0.0 | 0.0 | 1.0 | 1.0 | 0.0 | 0.5 | 0.5 | 0.106483 | 0.231969 | 0.251479 | 0.019509 |
| 3 | 0202d | 0.0 | 1.0 | 1.0 | 1.0 | 0.0 | 0.5 | 0.5 | 0.106483 | 0.231969 | 0.251479 | 0.019509 |
| 4 | 011cb | 0.0 | 1.0 | 0.0 | 1.0 | 0.0 | 0.5 | 0.5 | 0.106483 | 0.142189 | 0.155678 | 0.013489 |

Ground truth ATE is 0.01719144406311028

CausalData(df=(10000, 5), treatment='d', outcome='conversion', confounders=['platform_ios', 'country_usa', 'source_paid'])

| treatment | count | mean | std | min | p10 | p25 | median | p75 | p90 | max | |

|---|---|---|---|---|---|---|---|---|---|---|---|

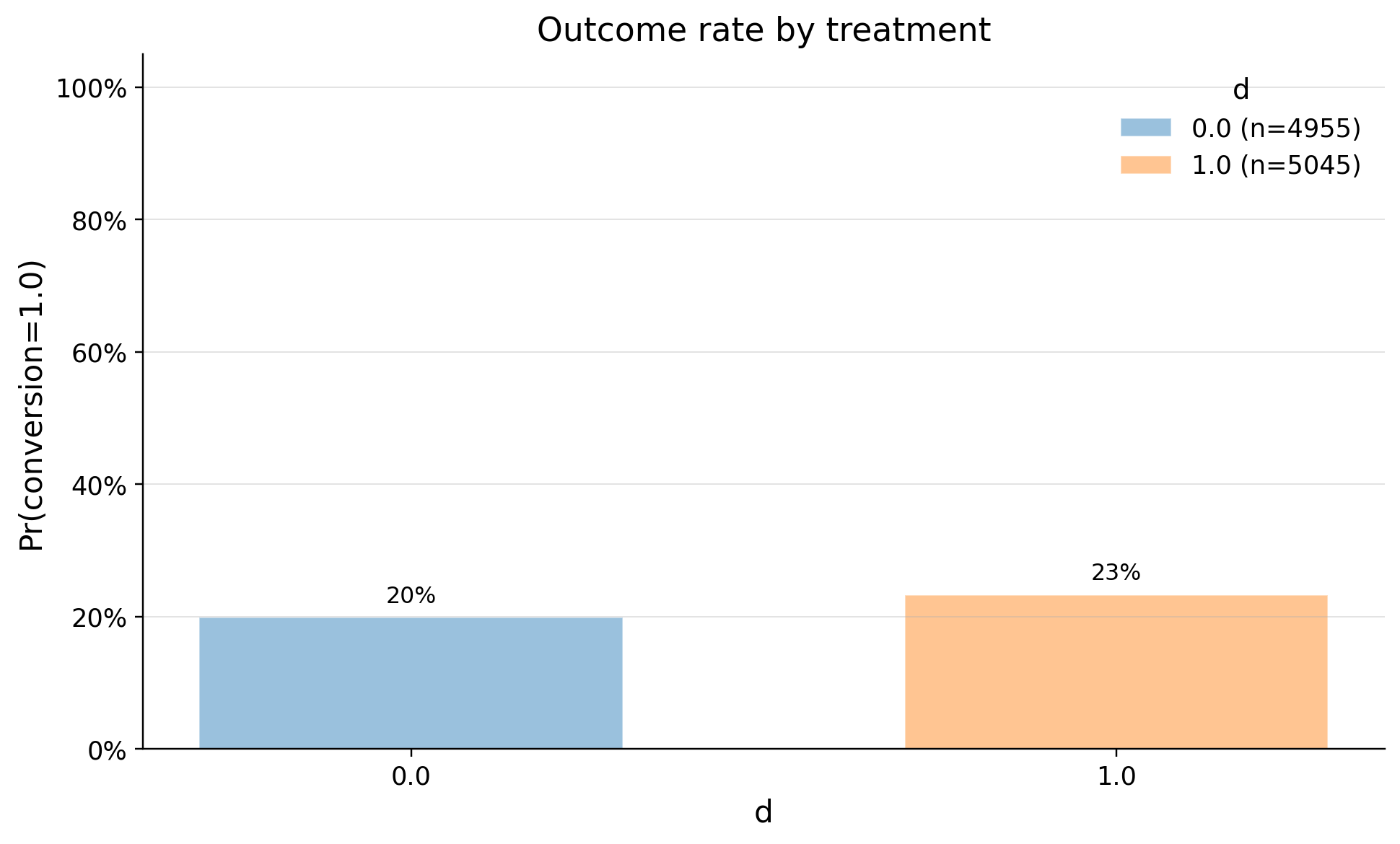

| 0 | 0.0 | 4955 | 0.198991 | 0.399281 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 1.0 |

| 1 | 1.0 | 5045 | 0.232904 | 0.422723 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 1.0 |

Monitoring

Our system splits users randomly: half of them should get the new onboarding and the other half should not. We monitor the split with a sample ratio mismatch (SRM) test. Read more at https://causalis.causalcraft.com/articles/srm

SRMResult(status=no SRM, p_value=0.36812, chi2=0.8100)
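To make the SRM check concrete, here is a minimal sketch of a chi-square goodness-of-fit test on the two group sizes. It is not the causalis implementation: the function name `srm_chi2` and the 50/50 expected split are assumptions made for illustration, and only the Python standard library is used. With the group sizes from the summary table it should land on the chi2 and p-value reported above.

```python
import math


def srm_chi2(n_control: int, n_treated: int, expected_share_treated: float = 0.5):
    """Chi-square goodness-of-fit test for a two-group split (1 degree of freedom).

    Hypothetical helper for illustration; not part of causalis.
    """
    n_total = n_control + n_treated
    expected_treated = n_total * expected_share_treated
    expected_control = n_total - expected_treated
    chi2 = (
        (n_treated - expected_treated) ** 2 / expected_treated
        + (n_control - expected_control) ** 2 / expected_control
    )
    # With 1 degree of freedom, P(chi-square > x) equals erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Group sizes taken from the summary table (4955 control, 5045 treated).
chi2, p_value = srm_chi2(n_control=4955, n_treated=5045)
print(f"chi2={chi2:.4f}, p_value={p_value:.5f}")
```

A large p-value means the observed split is compatible with the intended 50/50 allocation, so there is no sign of a mismatch.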

Check the confounders balance

Are the groups equal in terms of confounders? We need to choose confounders using domain and business sense and then check their balance. The standard benchmark: ks_pvalue < 0.05 flags a confounder whose distribution differs between the groups.

| | confounders | mean_d_0 | mean_d_1 | abs_diff | smd | ks_pvalue |
|---|---|---|---|---|---|---|
| 0 | source_paid | 0.299092 | 0.313776 | 0.014684 | 0.031853 | 0.64592 |
| 1 | platform_ios | 0.494046 | 0.502874 | 0.008828 | 0.017654 | 0.98861 |
| 2 | country_usa | 0.586276 | 0.591873 | 0.005597 | 0.011374 | 1.00000 |

As we can see, the system split users randomly: every ks_pvalue is well above 0.05.
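The smd column can be approximated by hand. The sketch below assumes the standardized mean difference is the difference in group means divided by the square root of the average of the two group variances, and that each confounder is a 0/1 indicator so its variance is p(1 - p). Both are assumptions about how the column was computed, and `smd_binary` is a hypothetical helper, so expect agreement with the table only up to rounding.

```python
import math


def smd_binary(mean_control: float, mean_treated: float) -> float:
    """Standardized mean difference for a 0/1 covariate (hypothetical helper)."""
    # Variance of a Bernoulli variable is p * (1 - p); average the two groups.
    pooled_var = (
        mean_control * (1 - mean_control) + mean_treated * (1 - mean_treated)
    ) / 2
    return (mean_treated - mean_control) / math.sqrt(pooled_var)


# Group means copied from the balance table: (mean_d_0, mean_d_1).
balance = {
    "source_paid": (0.299092, 0.313776),
    "platform_ios": (0.494046, 0.502874),
    "country_usa": (0.586276, 0.591873),
}

smd = {name: smd_binary(m0, m1) for name, (m0, m1) in balance.items()}
for name, value in smd.items():
    print(f"{name}: smd={value:.4f}")
```

A common rule of thumb (not taken from this article) treats an absolute SMD below 0.1 as negligible imbalance; all three confounders are far below that.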

Estimation with Diff-in-Means

Inference methods

The Causalis.DiffInMeans model implements ttest, conversion_ztest and bootstrap:

- Use conversion_ztest when users < 100k and the outcome is binary.

- Use bootstrap when users < 10k, the outcome is a ratio metric, or the metric is highly skewed.

- In all other cases, use ttest.

We will use conversion_ztest for our scenario.
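The selection rules can be written down as a small decision helper. This is only a sketch: the function `choose_inference` and its parameters are hypothetical and not part of causalis, and it assumes the rules are checked in the order they are listed, so a small binary experiment resolves to conversion_ztest rather than bootstrap.

```python
def choose_inference(
    n_users: int,
    is_binary: bool,
    is_ratio: bool = False,
    is_highly_skewed: bool = False,
) -> str:
    """Pick an inference method name following the rules of thumb listed above.

    Hypothetical helper; the precedence between overlapping rules is an assumption.
    """
    if is_binary and n_users < 100_000:
        return "conversion_ztest"
    if n_users < 10_000 or is_ratio or is_highly_skewed:
        return "bootstrap"
    return "ttest"


# Our scenario: 10,000 users and a binary conversion outcome.
method = choose_inference(n_users=10_000, is_binary=True)
print(method)
```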

conversion_ztest

The conversion_ztest performs a statistical comparison of conversion rates between two groups (Treatment and Control). It provides a p-value for the hypothesis test, and robust confidence intervals for both absolute and relative differences.

1. Observed Metrics

For each group (Control $c$, Treatment $t$):

- $n_c, n_t$: Total number of observations.

- $x_c, x_t$: Number of successes (conversions).

- $\hat{p}_c = x_c / n_c$, $\hat{p}_t = x_t / n_t$: Observed conversion rates.

2. Hypothesis Test (P-value)

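The test evaluates $H_0: p_t = p_c$ (no difference).

- Pooled Proportion: $\hat{p} = \frac{x_c + x_t}{n_c + n_t}$

- Pooled Standard Error: $SE_{pool} = \sqrt{\hat{p}(1 - \hat{p})\left(\frac{1}{n_c} + \frac{1}{n_t}\right)}$

- Z-Statistic: $z = \frac{\hat{p}_t - \hat{p}_c}{SE_{pool}}$

- P-value: $2\,(1 - \Phi(|z|))$, where $\Phi$ is the standard normal CDF.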
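The test steps above can be sketched in a few lines of standard-library Python. This is a textbook pooled two-proportion z-test, not the causalis source; the function name `pooled_ztest` is hypothetical, and the success counts (986 and 1175) are derived here by multiplying each group's count by its mean in the summary table and rounding.

```python
import math


def pooled_ztest(x_c: int, n_c: int, x_t: int, n_t: int):
    """Two-sided pooled z-test for a difference in conversion rates (sketch)."""
    p_c, p_t = x_c / n_c, x_t / n_t
    p_pool = (x_c + x_t) / (n_c + n_t)
    se_pool = math.sqrt(p_pool * (1 - p_pool) * (1 / n_c + 1 / n_t))
    z = (p_t - p_c) / se_pool
    # 2 * (1 - Phi(|z|)) written with the complementary error function.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Counts derived from the summary table: 4955 * 0.198991 and 5045 * 0.232904.
z, p_value = pooled_ztest(x_c=986, n_c=4955, x_t=1175, n_t=5045)
print(f"z={z:.3f}, p_value={p_value:.6f}")
```

The p-value is far below 0.05, which is why it shows up as 0.0000 when rounded to four decimals.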

3. Absolute Difference (Newcombe CI)

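To calculate the confidence interval for the difference $\Delta = \hat{p}_t - \hat{p}_c$, we use the Newcombe method, which is more robust than standard Wald intervals for conversion rates.

- Wilson Score Interval for each group $i \in \{c, t\}$: $(l_i, u_i) = \frac{\hat{p}_i + \frac{z_{\alpha/2}^2}{2 n_i} \pm z_{\alpha/2}\sqrt{\frac{\hat{p}_i(1 - \hat{p}_i)}{n_i} + \frac{z_{\alpha/2}^2}{4 n_i^2}}}{1 + \frac{z_{\alpha/2}^2}{n_i}}$

- Combined Interval: $\left[\Delta - \sqrt{(\hat{p}_t - l_t)^2 + (u_c - \hat{p}_c)^2},\ \Delta + \sqrt{(u_t - \hat{p}_t)^2 + (\hat{p}_c - l_c)^2}\right]$ (where $z_{\alpha/2}$ is the critical value for the chosen $\alpha$)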
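As an illustration of the Newcombe construction, the sketch below builds a Wilson score interval per group and combines the two into an interval for the difference. It follows the textbook hybrid-score formulas, and the function names are hypothetical. The library's exact implementation is not shown in this article, so the interval printed here need not coincide with the ci_abs it reports. The success counts are derived from the summary table (count times mean, rounded).

```python
import math
from statistics import NormalDist


def wilson_interval(x: int, n: int, z: float):
    """Wilson score interval for a single proportion."""
    p = x / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


def newcombe_diff_ci(x_c: int, n_c: int, x_t: int, n_t: int, alpha: float = 0.05):
    """Textbook Newcombe interval for p_t - p_c (sketch, not the causalis code)."""
    z = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    p_c, p_t = x_c / n_c, x_t / n_t
    l_c, u_c = wilson_interval(x_c, n_c, z)
    l_t, u_t = wilson_interval(x_t, n_t, z)
    diff = p_t - p_c
    lower = diff - math.sqrt((p_t - l_t) ** 2 + (u_c - p_c) ** 2)
    upper = diff + math.sqrt((u_t - p_t) ** 2 + (p_c - l_c) ** 2)
    return diff, lower, upper


diff, lower, upper = newcombe_diff_ci(x_c=986, n_c=4955, x_t=1175, n_t=5045)
print(f"diff={diff:.4f}, ci=({lower:.4f}, {upper:.4f})")
```

Because the Wilson limits are not symmetric around the observed rate, the resulting interval is not exactly symmetric around the point estimate either.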

4. Relative Difference (Lift)

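Lift measures the percentage change: $\text{Lift} = 100 \cdot \frac{\hat{p}_t - \hat{p}_c}{\hat{p}_c}$. The confidence interval uses a delta-method approximation on the lift scale:

- Relative CI: $\text{Lift} \pm 100 \cdot z_{\alpha/2} \sqrt{\frac{\hat{p}_t(1 - \hat{p}_t)}{n_t\, \hat{p}_c^2} + \frac{\hat{p}_t^2\, \hat{p}_c(1 - \hat{p}_c)}{n_c\, \hat{p}_c^4}}$

(If $\hat{p}_c$ is extremely close to 0, the lift is undefined; the implementation returns inf/0 and NaN for the CI.)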
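A delta-method interval for the lift can be sketched as follows. The variance of the ratio of the two rates is approximated to first order from the per-group binomial variances; the function name `lift_ci`, the 1e-12 guard, and the choice to return NaN for all three values when the control rate is near zero are illustrative assumptions, not the causalis behavior. With counts derived from the summary table (986 of 4955 and 1175 of 5045), the output should land close to the value_relative row of the result table.

```python
import math
from statistics import NormalDist


def lift_ci(x_c: int, n_c: int, x_t: int, n_t: int, alpha: float = 0.05):
    """Percentage lift with a delta-method confidence interval (sketch)."""
    p_c, p_t = x_c / n_c, x_t / n_t
    if p_c < 1e-12:
        # Lift is undefined when the control rate is (almost) zero.
        return math.nan, math.nan, math.nan
    z = NormalDist().inv_cdf(1 - alpha / 2)
    var_c = p_c * (1 - p_c) / n_c
    var_t = p_t * (1 - p_t) / n_t
    # First-order (delta-method) standard error of the ratio p_t / p_c.
    se_ratio = math.sqrt(var_t / p_c**2 + p_t**2 * var_c / p_c**4)
    lift = 100 * (p_t / p_c - 1)
    half_width = 100 * z * se_ratio
    return lift, lift - half_width, lift + half_width


lift, lift_lower, lift_upper = lift_ci(x_c=986, n_c=4955, x_t=1175, n_t=5045)
print(f"lift={lift:.4f}%, ci=({lift_lower:.4f}, {lift_upper:.4f})")
```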

| field | value |
|---|---|
| estimand | ATE |
| model | DiffInMeans |
| value | 0.0339 (ci_abs: 0.0111, 0.0567) |
| value_relative | 17.0425 (ci_rel: 8.2614, 25.8235) |
| alpha | 0.0500 |
| p_value | 0.0000 |
| is_significant | True |
| n_treated | 5045 |
| n_control | 4955 |
| treatment_mean | 0.2329 |
| control_mean | 0.1990 |
| time | 2026-04-01 |