ASCM Model Diagnostics

This notebook presents the scm diagnostics research workflow and key analysis steps.

1. Setup

First, we generate synthetic data and fit an Augmented SCM model, following the baseline example.

2. Mathematical Foundations: The pre_scale Metric

Before running specific tests, run_scm_diagnostics calculates a baseline variability measure called pre_scale for the treated unit during the pre-treatment period.

Most diagnostic thresholds are defined as a percentage of this scale. This ensures that the "goodness of fit" is judged relative to the inherent volatility of the data.

Robust Scale Estimators

To avoid being misled by outliers or trends, the function calculates four different scale metrics and takes the maximum:

-

Standard Deviation:

-

Median Absolute Deviation (MAD): Scaled to be consistent with for a normal distribution:

-

Interquartile Range (IQR): Also scaled for normal consistency:

-

First-Difference Scale: Calculated using the standard deviation of first differences, useful when there is a trend or high autocorrelation. Since for i.i.d. variables, we scale by :

3. Diagnostic Tests

The diagnostics are divided into fit-based checks and model-based (weight) checks.

A. Fit-Based Diagnostics

-

Pre-treatment RMSE: Checks if the overall fit in the pre-treatment period is tight.

-

Max Absolute Pre-gap: Checks for extreme outliers in the pre-treatment period.

-

Mean Gap Last K Pre-periods: Checks for pre-trend drift right before the treatment (typically ). If the average gap is far from zero, the parallel trends assumption might be failing just as treatment begins.

B. Weight-Based Diagnostics

Augmented SCM (ASCM) allows for weights that can be negative or greater than one to achieve a better pre-treatment fit (extrapolation). However, extreme weights indicate that the counterfactual relies on unstable linear combinations of donors.

-

Max Absolute Weight: Detects extreme individual weights.

-

L1 Norm of Weights: Detects when the total sum of absolute weights is too high.

-

Negative Weight Share: Measures how much the estimate relies on negative weights (pure extrapolation vs. interpolation).

4. Running the Diagnostics

We can now execute run_scm_diagnostics and inspect the results table.

| test | flag | value | threshold | message | |

|---|---|---|---|---|---|

| 0 | pre_rmse_augmented | GREEN | 0.366248 | <= 0.5254 | Pre-treatment RMSE is within tolerance. |

| 1 | max_abs_pre_gap_augmented | GREEN | 0.988290 | <= 1.314 | Largest pre-treatment gap is within tolerance. |

| 2 | mean_gap_last_k_pre_augmented | GREEN | 0.207285 | <= 0.6568 | Average gap in the last 3 pre periods is cente... |

| 3 | max_abs_weight_augmented | GREEN | 0.201943 | <= 2 | No extreme augmented donor weight detected (mo... |

| 4 | l1_norm_weights_augmented | GREEN | 2.245170 | <= 5 | Total absolute augmented weight mass is contro... |

| 5 | negative_weight_share | GREEN | 0.277300 | <= 0.3 | Negative-weight share is moderate. |

| 6 | slsqp_fallback_count | GREEN | 0.000000 | == 0 | No optimizer fallback events recorded. |

| 7 | suppressed_fit_warning_count | GREEN | 0.000000 | == 0 | No suppressed fit warnings were captured. |

5. Interpreting Flags

- GREEN: Metric is within optimal tolerance. The estimate is likely robust.

- YELLOW: Metric exceeds the warning threshold. Consider investigating the pre-treatment fit or donor pool. The estimate might be sensitive to small changes.

- RED: Indicates a severe problem, such as optimizer failure or extreme fit issues. The estimate should be treated with high skepticism.

6. Placebo Tests

Robustness tests check if the treatment effect is unique to the treated unit and time.

A. Placebo-in-Space (Permutation Test)

This test (Abadie et al. 2010) applies the SCM to every unit in the donor pool, treating each donor as the "treated" unit.

- For each donor unit , we fit a synthetic control model using only the remaining donors.

- We calculate the Root Mean Squared Prediction Error (RMSE) for both pre- and post-treatment periods:

- We compute the RMSPE Ratio:

- Interpretation: If the actual treated unit's ratio is much larger than the distribution of for placebo units, it indicates that the effect is statistically significant. The p-value is effectively:

B. Placebo-in-Time (Falsification Test)

This test shifts the treatment start date to an earlier date within the true pre-treatment period.

- We choose and a pseudo-post-horizon .

- We fit the SCM on and measure the "effect" on .

- Since no treatment occurred during this period, the estimated Average Treatment Effect on the Treated (ATT) should be close to zero and statistically insignificant.

- Interpretation: If the null hypothesis (ATT=0) is rejected for many placebo dates, it suggests that the parallel trends assumption is violated (e.g., due to pre-treatment anticipation effects or unobserved trends).

| placebo_treatment_start | n_pre_before_placebo | n_post_after_placebo | average_att_placebo | ci_lower | ci_upper | p_value | rejects_zero | pre_fit_metric | |

|---|---|---|---|---|---|---|---|---|---|

| 0 | 2000-03 | 2 | 6 | 1.882234 | -16.331756 | 20.096223 | 0.414358 | False | 3.892700e-09 |

| 1 | 2000-04 | 3 | 6 | 1.269647 | -2.922810 | 5.462105 | 0.322397 | False | 4.675707e-08 |

| 2 | 2000-05 | 4 | 6 | 1.558211 | -2.782112 | 5.898534 | 0.262430 | False | 7.740750e-09 |

| 3 | 2000-06 | 5 | 6 | 1.314722 | -2.945265 | 5.574709 | 0.315493 | False | 8.664180e-09 |

| 4 | 2000-07 | 6 | 6 | 1.415188 | -1.584680 | 4.415056 | 0.179511 | False | 8.107963e-09 |

7. Sensitivity Analysis

Sensitivity tests assess how dependent the final estimate is on specific assumptions or data subsets.

Leave-One-Donor-Out (LODO) Sensitivity

A common concern in SCM is that the result might be driven by a single, idiosyncratic donor unit. This test (Abadie et al. 2015) re-estimates the model times, each time removing one unit from the donor pool.

- Let be the set of all available donor units.

- For each unit , we fit a new model using .

- We calculate the re-estimated treatment effect and the change relative to the full model:

- Interpretation:

- Small for all indicates a robust estimate.

- A large suggests that donor is highly influential. If that donor's pre-fit was poor or if it has unusual characteristics, the main estimate should be treated with caution.

Effective Number of Donors

To understand how concentrated the weights are, we calculate the Effective Number of Donors (), which is the inverse of the Herfindahl-Hirschman Index (HHI) of the weights:

- : The counterfactual is built using only one donor.

- : All donors have equal weights ().

Lower indicates higher reliance on a small subset of donors.

Interpreting the Results Table

- dropped_donor: Donor unit removed in that refit.

- average_att_reestimated: ATT calculated after removing that donor.

- delta_vs_full_model: . Values near zero indicate the estimate is stable; large values suggest the donor is highly influential.

- pre_rmse_reestimated: Pre-treatment fit error after refit. A significant increase suggests the dropped donor was essential for maintaining fit quality.

- max_weight_after_refit: Largest donor weight in the refitted model.

- effective_n_donors_after_refit: The effective number of donors () for the refitted model.

| dropped_donor | average_att_reestimated | delta_vs_full_model | pre_rmse_reestimated | max_weight_after_refit | effective_n_donors_after_refit | |

|---|---|---|---|---|---|---|

| 0 | donor_1 | 4.207858 | 0.262223 | 0.171405 | 0.303030 | 0.946472 |

| 1 | donor_10 | 4.587795 | 0.642161 | 0.160802 | 0.349188 | 0.909245 |

| 2 | donor_11 | 4.189136 | 0.243502 | 0.160120 | 0.353247 | 0.909496 |

| 3 | donor_12 | 4.916255 | 0.970621 | 0.189167 | 0.336427 | 0.978294 |

| 4 | donor_13 | 4.468127 | 0.522492 | 0.162525 | 0.341735 | 0.927949 |

8. Diagnostic Visualizations

Visualization is a critical step in assessing SCM results. It allows for a qualitative check of the pre-treatment fit and the post-treatment divergence.

Observed vs. Synthetic Plot

This plot displays the time series of the actual treated unit alongside its synthetic counterfactual (both standard and augmented, if available).

- Pre-treatment period: The closer the observed and synthetic lines are, the better the model's fit. A poor pre-fit (large gaps) suggests that the donor pool cannot adequately represent the treated unit.

- Post-treatment period: Divergence between the lines indicates a treatment effect.

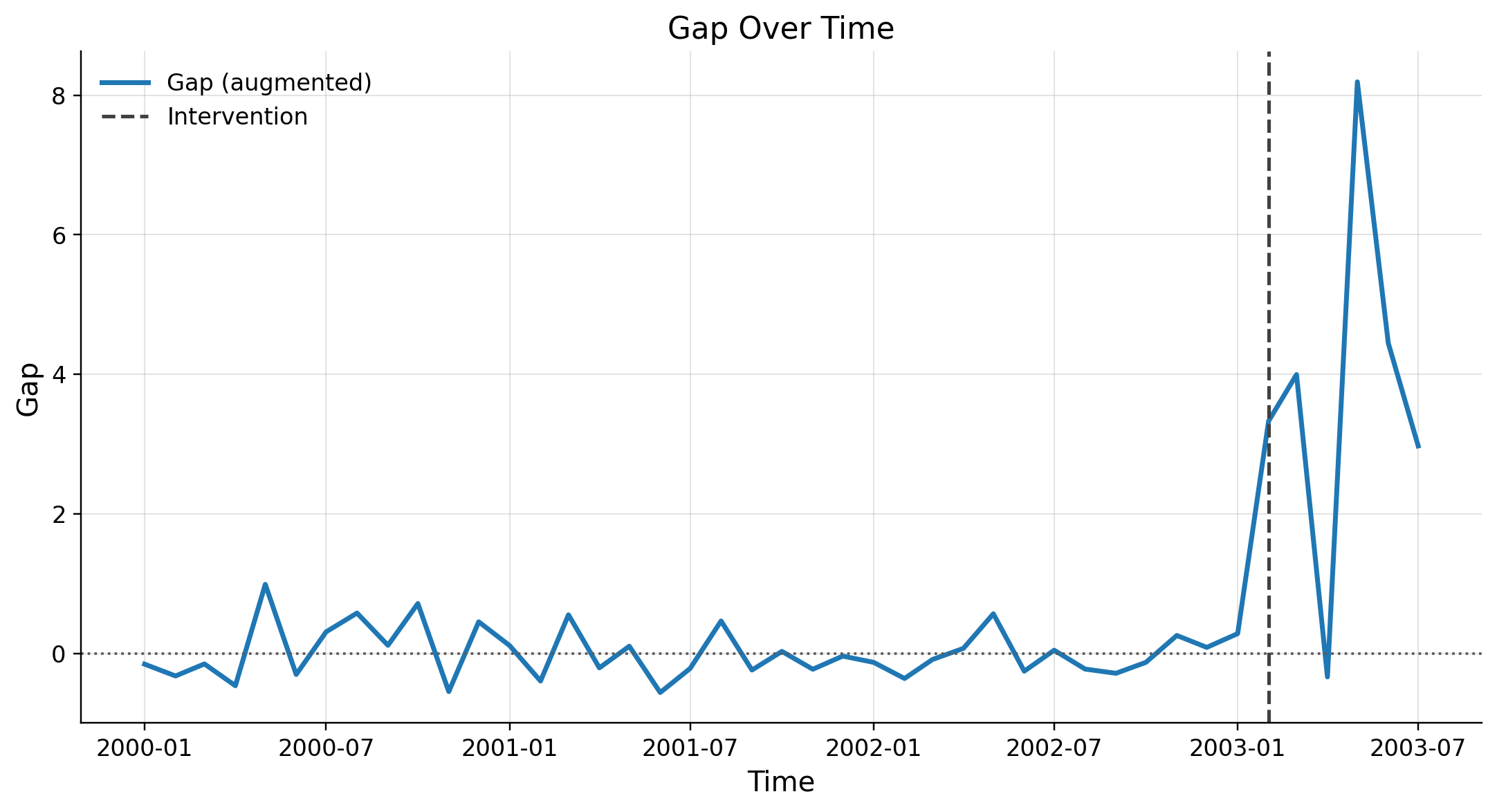

Gap Over Time Plot

The gap plot shows the difference between the observed and synthetic outcomes:

- In the pre-treatment period, the gap should ideally fluctuate closely around zero.

- In the post-treatment period, the gap represents the estimated treatment effect () at each point in time.

Interpreting the Gap:

- If the gap is systematically positive (or negative) after treatment, it suggests a persistent effect.

- If the gap fluctuates around zero even after treatment, it suggests no significant effect.